What is Data Integration?

Data integration is the process of combining data from different sources into a single system. It’s a vital step for any organization that wants its data to be consistent, accessible, and accurate. A key part of data integration is breaking down data silos. By unifying disparate information instead of leaving it segmented, an organization can hold all of its data in a central location, in a form that people and systems can use to derive actionable business intelligence insights.

For example, when working with IBM i data sources, you may want to bring your data into a centralized data warehouse. One common misconception about IBM i integrations is that IBM i is inflexible, making integration difficult, but this isn’t true. With data integration, you can enable a range of business processes to access and integrate IBM i and other business-critical data in their workflows.

Another core component of data integration is an EDI solution that enables software to access your unified data. You make this possible by bringing data into a data warehouse. Once it’s there, various applications can access and use it.

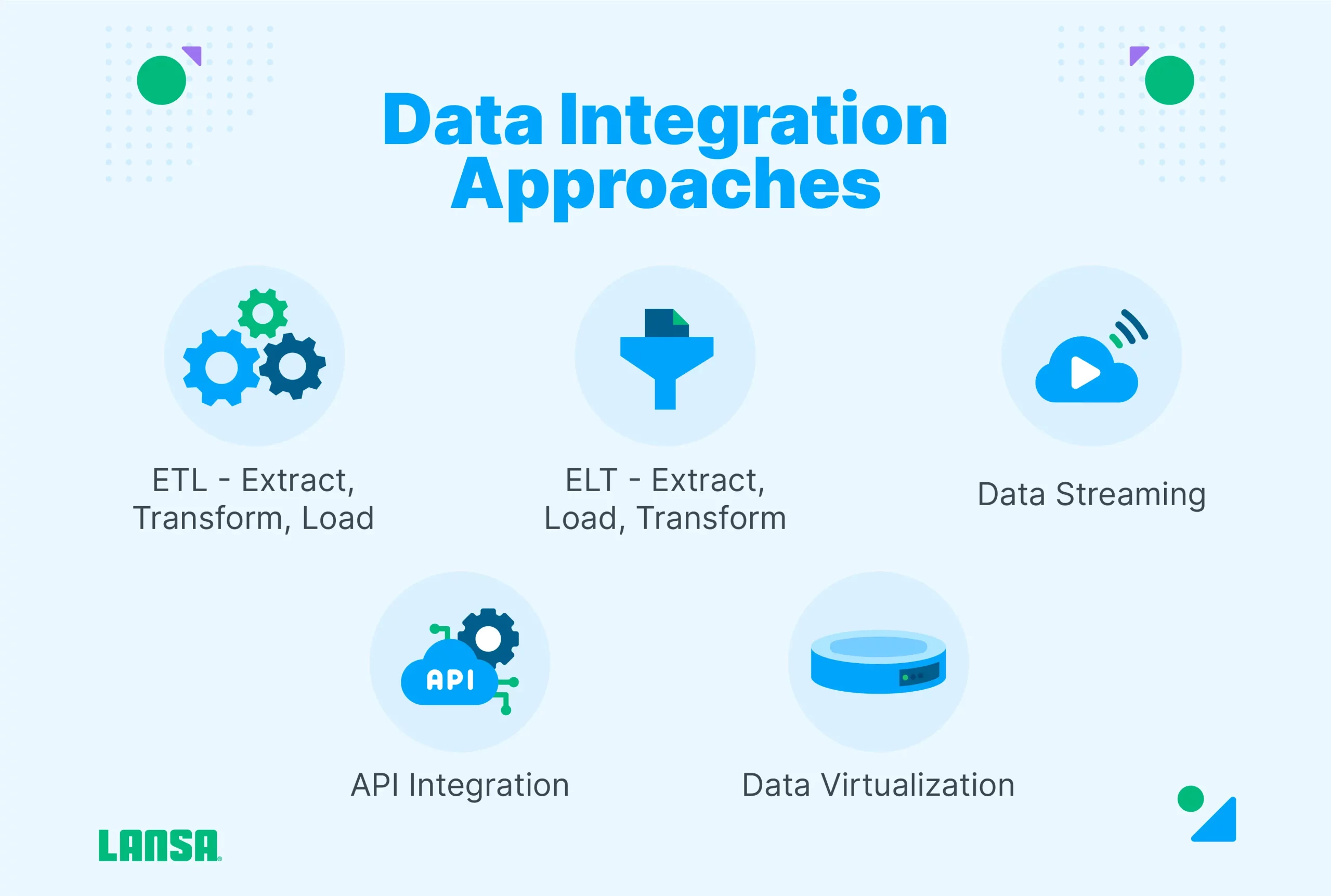

Five Data Integration Approaches

Here are the five types of data integration approaches:

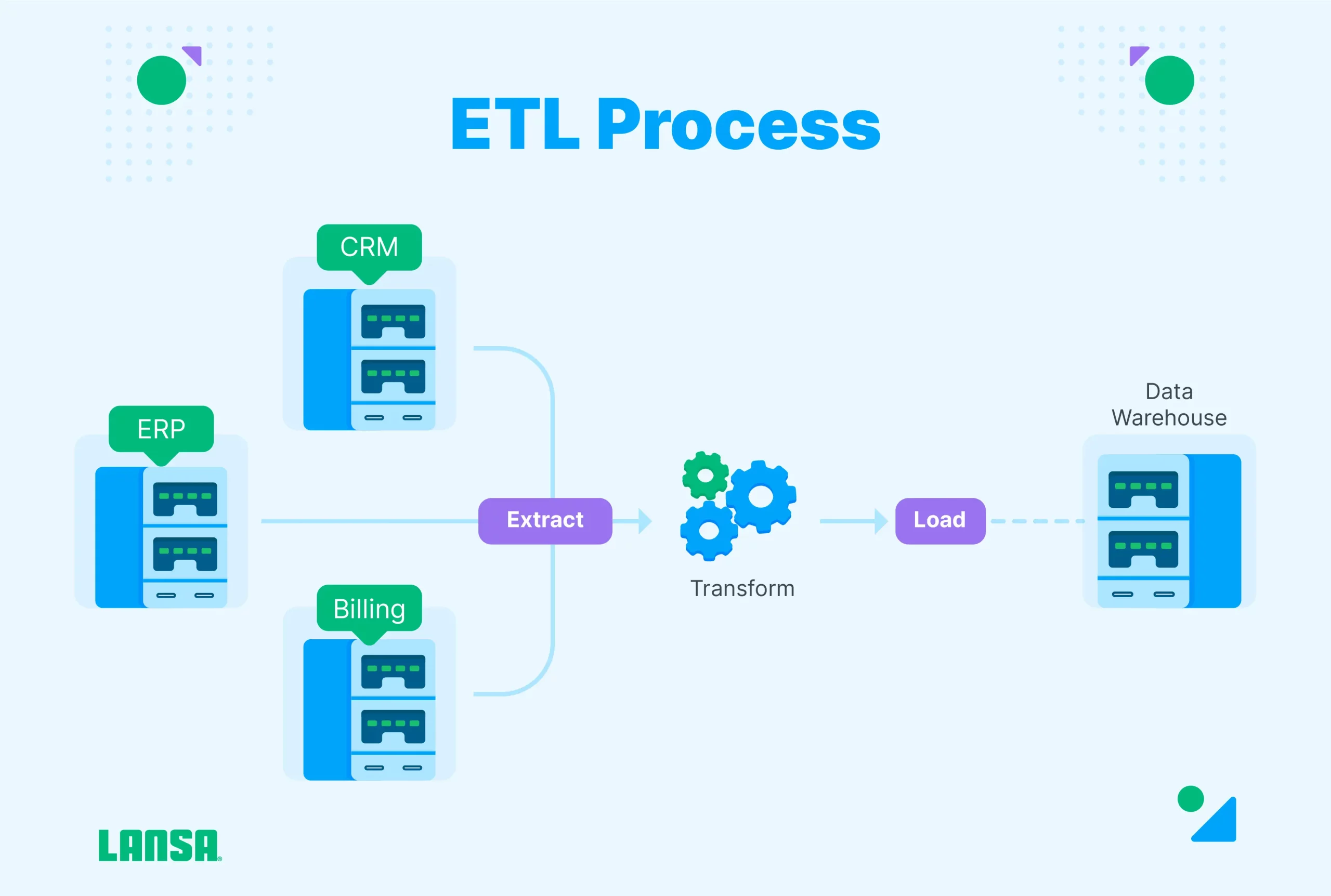

ETL (Extract, Transform, Load)

ETL involves converting raw data to prepare it for a target system by extracting it from the source application, then transforming it, and then loading it into the target application. The transformation happens in a staging area, and after the transformation is complete, the data gets loaded into a target repository, such as a data warehouse. This makes analysis by the target system faster and more accurate. ETL is best suited for smaller datasets that require complicated transformations.

- Data is extracted from source systems

- Transformed using predefined rules

- Loaded into your target system
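
The three steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the CSV content, the `orders` table, and the cleaning rules are all assumptions made for the example, and an in-memory SQLite database stands in for the data warehouse.

```python
import csv
import io
import sqlite3

# Hypothetical raw export from a source system (assumed for illustration).
RAW_CSV = """order_id,customer,amount
1, alice ,10.50
2,BOB,not_a_number
3,carol,7.25
"""

def extract(raw_text):
    """Extract: read rows from the source as dictionaries."""
    return list(csv.DictReader(io.StringIO(raw_text)))

def transform(rows):
    """Transform: apply predefined rules in a staging step, before loading."""
    staged = []
    for row in rows:
        try:
            amount = float(row["amount"])
        except ValueError:
            continue  # Rule (assumed): drop rows whose amount is not numeric.
        name = row["customer"].strip().title()  # Rule (assumed): tidy names.
        staged.append((int(row["order_id"]), name, amount))
    return staged

def load(conn, staged_rows):
    """Load: write the already-transformed rows into the target table."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS orders "
        "(order_id INTEGER PRIMARY KEY, customer TEXT, amount REAL)"
    )
    conn.executemany("INSERT INTO orders VALUES (?, ?, ?)", staged_rows)
    conn.commit()

def run_etl(raw_text):
    """Run extract, transform, load in that order and return the target."""
    conn = sqlite3.connect(":memory:")  # stand-in for a data warehouse
    load(conn, transform(extract(raw_text)))
    return conn
```

Calling `run_etl(RAW_CSV)` leaves only the cleaned rows in `orders`. The point of the ordering is that the target never sees untransformed data.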

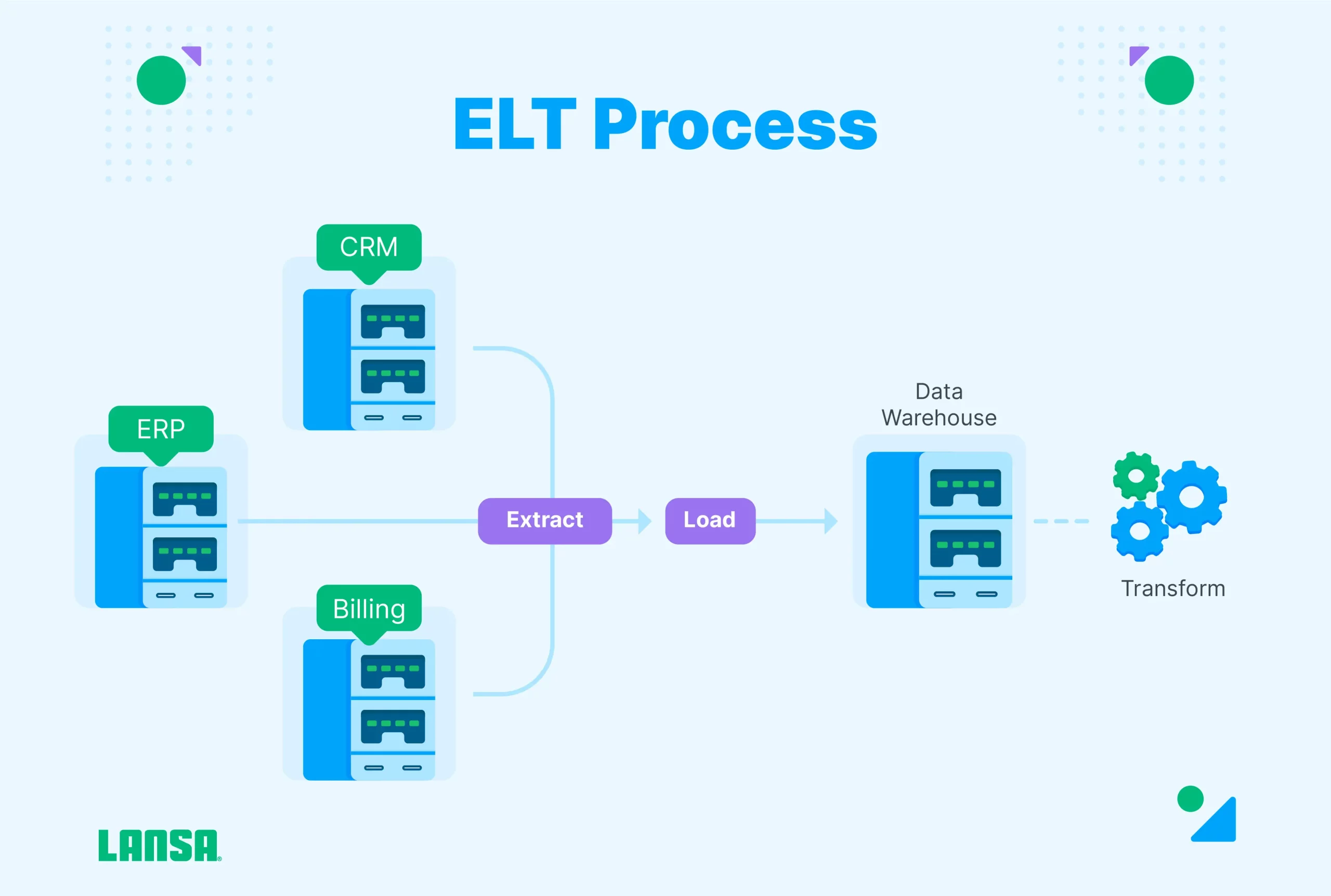

ELT (Extract, Load, Transform)

An ELT system loads the data first and then transforms it inside the target system, which could be a data lake or warehouse in the cloud. This works best for large datasets because the loading process is often faster.

Systems typically use either a micro-batch or change data capture (CDC) approach. With micro-batch ELT, only the data that’s been modified since the last load gets loaded. CDC is different in that it continually loads data as it changes in the source.
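
One way to picture the micro-batch approach is a "watermark" that remembers the latest modification time already loaded. The sketch below assumes each source row carries an `updated_at` timestamp. That field name and the sample rows are hypothetical, and real CDC tools typically read a database change log rather than comparing timestamps.

```python
from datetime import datetime

def micro_batch(source_rows, last_loaded_at):
    """Return the rows modified since the last load, plus the new watermark."""
    changed = [r for r in source_rows if r["updated_at"] > last_loaded_at]
    # If nothing changed, keep the old watermark.
    new_watermark = max((r["updated_at"] for r in changed), default=last_loaded_at)
    return changed, new_watermark

# Hypothetical source rows (assumed for illustration).
source = [
    {"id": 1, "updated_at": datetime(2024, 1, 1, 9, 0)},
    {"id": 2, "updated_at": datetime(2024, 1, 1, 9, 5)},
    {"id": 3, "updated_at": datetime(2024, 1, 1, 9, 10)},
]
```

Each run passes in the watermark returned by the previous run, so rows that have not changed are skipped.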

- Data is extracted from source systems

- Loaded into your target system

- Transformed using predefined rules
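
For contrast with ETL, here is a minimal ELT sketch. It assumes an in-memory SQLite database as a stand-in for a cloud warehouse, and the `raw_orders` and `orders` tables and the cleaning rules are hypothetical. The raw rows land in the target unchanged, and the transformation runs afterwards as SQL inside the target.

```python
import sqlite3

def load_raw(conn, rows):
    """Load: copy source rows into the target unchanged (all values as text)."""
    conn.execute(
        "CREATE TABLE IF NOT EXISTS raw_orders "
        "(order_id TEXT, customer TEXT, amount TEXT)"
    )
    conn.executemany("INSERT INTO raw_orders VALUES (?, ?, ?)", rows)

def transform_in_target(conn):
    """Transform: run the predefined rules as SQL inside the target system."""
    conn.execute("DROP TABLE IF EXISTS orders")
    conn.execute(
        """
        CREATE TABLE orders AS
        SELECT CAST(order_id AS INTEGER) AS order_id,
               UPPER(TRIM(customer))     AS customer,
               CAST(amount AS REAL)      AS amount
        FROM raw_orders
        WHERE TRIM(amount) <> ''  -- rule (assumed): skip rows with no amount
        """
    )

# Hypothetical rows already extracted from a source system.
extracted = [("1", " alice ", "10.50"), ("2", "bob", ""), ("3", "carol", "7.25")]

warehouse = sqlite3.connect(":memory:")
load_raw(warehouse, extracted)
transform_in_target(warehouse)
```

Because the raw table is kept, the transformation can be rerun with different rules without extracting from the source again.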

Data Streaming

Data streaming moves data continuously, in real time, from the source to the target, instead of loading it in batches. Data integration platforms use data streaming to provide data warehouses, lakes, and cloud platforms with data ready for analytics.

- Processing

- Delivering continuously in real time

- Enabling instant insights and decisions
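
As a rough sketch of the idea, Python generators can model a stream in which each record is processed and delivered as soon as it arrives, rather than waiting for a batch to fill. The sensor readings and function names here are hypothetical. A real deployment would read from a message broker or similar source that never ends.

```python
from typing import Iterable, Iterator

def source_events() -> Iterator[dict]:
    """Stand-in for a continuous source (a real stream would not end)."""
    readings = [
        {"sensor": "a", "temp_c": 20.0},
        {"sensor": "b", "temp_c": 21.5},
        {"sensor": "a", "temp_c": 22.0},
    ]
    for reading in readings:
        yield reading

def process(events: Iterable[dict]) -> Iterator[dict]:
    """Process each event individually as it flows through."""
    for event in events:
        yield {**event, "temp_f": event["temp_c"] * 9 / 5 + 32}

def deliver(events: Iterable[dict], target: list) -> None:
    """Deliver each processed event to the target immediately."""
    for event in events:
        target.append(event)

analytics_target = []
deliver(process(source_events()), analytics_target)
```

No stage holds more than one event at a time, which is the property that separates streaming from batch loading.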

Application Programming Interface (API) Integration

With application programming interface (API) integration, you move data between different applications through their APIs so the target app gets the data its functions need. For instance, your human resources system may need the same data as your finance solution. In this case, the API integration would make sure that the data coming from the finance app is consistent with what the HR system needs.

Typically, the systems sharing information have their own APIs used for sending and receiving data, which paves the way for using SaaS automation tools to streamline the integration.
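
The finance-to-HR case could look roughly like the sketch below. Both "APIs" are plain Python functions standing in for real HTTP endpoints, and every field name (`emp_id`, `annual_salary_cents`, `employeeId`, and so on) is an assumption made for illustration. The integration's job is the middle step: reshaping one system's record into what the other expects.

```python
def finance_api_get_employee(employee_id):
    """Hypothetical finance API response (hard-coded for illustration)."""
    return {
        "emp_id": employee_id,
        "full_name": "Ada Lovelace",
        "annual_salary_cents": 12000000,
    }

def to_hr_payload(finance_record):
    """Reshape a finance record into the format the HR system expects."""
    first, _, last = finance_record["full_name"].partition(" ")
    return {
        "employeeId": str(finance_record["emp_id"]),
        "firstName": first,
        "lastName": last,
        "salary": finance_record["annual_salary_cents"] / 100,
    }

hr_store = {}  # stand-in for the HR system's own storage

def hr_api_upsert_employee(payload):
    """Hypothetical HR API call that creates or updates an employee."""
    hr_store[payload["employeeId"]] = payload

def sync_employee(employee_id):
    """Read from the finance API, convert, and write to the HR API."""
    hr_api_upsert_employee(to_hr_payload(finance_api_get_employee(employee_id)))
```

Keeping the conversion in its own function makes the field mapping easy to review when either system changes its format.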

Data Virtualization

Data virtualization refers to a data management technique that gives you the ability to access and manipulate data without having to store a physical copy of it in a single repository [2].

Data virtualization is similar to streaming in that it also provides data in real-time. When an application or user requests data, the virtualization process makes it available by gathering it from disparate systems. This makes virtualization a good fit for systems that rely on high-performance query processes.

Using data virtualization, you also have the freedom to easily and quickly make adjustments to the source of the data or according to changes in business logic.
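
A toy version of this idea: the function below answers each request by querying two live sources and combining the results on the fly, without copying anything into a shared repository. The `customers` table, the `billing` dictionary, and the sample values are hypothetical. Commercial virtualization layers add query planning, caching, and security on top of this basic pattern.

```python
import sqlite3

# Source 1: a hypothetical CRM database.
crm = sqlite3.connect(":memory:")
crm.execute("CREATE TABLE customers (id INTEGER, name TEXT)")
crm.executemany("INSERT INTO customers VALUES (?, ?)", [(1, "Acme"), (2, "Globex")])

# Source 2: a hypothetical billing system, modeled as a dictionary.
billing = {1: 250.0, 2: 90.0}

def customer_view(customer_id):
    """Resolve the request against the live sources; nothing is copied."""
    row = crm.execute(
        "SELECT name FROM customers WHERE id = ?", (customer_id,)
    ).fetchone()
    if row is None:
        return None
    return {"id": customer_id, "name": row[0], "balance": billing.get(customer_id)}
```

Because the view reads the sources on every call, a change in the billing system shows up in the next request with no reload step.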

Data Integration Process

Gather Requirements

While you gather requirements for your data integration, your focus should be on the goals your company wants to accomplish. You then decide which technical, human, and monetary resources you need to meet these goals.

For example, a manufacturer may want to collect data from different machines on the factory floor. The company’s goal may be to better understand each machine’s production rate and maintenance requirements so decision-makers can choose which machines to purchase, estimate each unit’s cost of ownership, and see how each one contributes to the company’s bottom line. The company also wants to use the production data from the machines to design a more efficient inventory management system, linking the material ordering process to the overall production rate.

Each machine already produces data through its operational software. But to unify this data and make it useful, data architects have to make sure it’s in a format that a centralized system can understand and store. This may necessitate the following requirements:

- A data cleaning and transformation application

- People who understand how to maximize the effectiveness of this software

- A data storage system that keeps data in the formats decision-makers and the inventory management system can use

- If the company doesn’t already have one, an enterprise resource planning (ERP) solution that can integrate this data into several systems, including inventory management

Once these requirements have been established, the company is ready to dig into the details of the data they’re trying to integrate.

Data Profiling

Data profiling is when you analyze the source data to better understand its quality, structure, and unique characteristics. Also, during the data profiling phase, you identify challenges that could arise.

In addition, you make sure that the data you need meets your standards. For example, if the data you’re trying to integrate is sparse or inconsistent, you may not be able to use it to make certain mission-critical decisions.
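
A simple profiling pass might report, for each column, how much data is missing, how many distinct values appear, and which data types are mixed together. The sketch below is one possible shape for such a report. The machine records and the choice of metrics are assumptions made for illustration.

```python
def profile(rows):
    """Summarize missing values, distinct values, and types per column."""
    columns = sorted({key for row in rows for key in row})
    report = {}
    for col in columns:
        values = [row.get(col) for row in rows]
        present = [v for v in values if v not in (None, "")]
        report[col] = {
            "missing_ratio": 1 - len(present) / len(values),
            "distinct": len(set(present)),
            "types": sorted({type(v).__name__ for v in present}),
        }
    return report

# Hypothetical records from factory machines.
machine_rows = [
    {"machine": "m1", "units": 120},
    {"machine": "m2", "units": None},
    {"machine": "m1", "units": "95"},
    {"machine": "m3", "units": 110},
]

report = profile(machine_rows)
```

Here the `units` column would show a quarter of its values missing and a mix of `int` and `str`, which is the kind of inconsistency worth catching before the design phase.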

Design

During the design process, you establish a blueprint that your organization uses as it implements its automated data integration. In this step, you:

- Perform data mapping, to show how data will move from its source to where it needs to go [1]

- Decide which type of data transformation you need to establish, such as data cleaning, smoothing, or aggregation

- Figure out the relationships between different types of data and the systems that will use them after the integration
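
A data map can be written down as a small declarative table before any pipeline exists. In the sketch below, each source field is paired with a target field and a conversion rule. All field names and rules are hypothetical, chosen to match the factory example.

```python
# Source field -> (target field, conversion rule). All names are assumed.
FIELD_MAP = {
    "MachineID": ("machine_id", str.strip),
    "UnitsPerHr": ("units_per_hour", float),
    "LastSvc": ("last_service_date", lambda s: s[:10]),  # keep the date part
}

def apply_mapping(source_record, field_map=FIELD_MAP):
    """Build a target record by applying each mapped conversion."""
    return {
        target: convert(source_record[source])
        for source, (target, convert) in field_map.items()
    }

example = apply_mapping(
    {"MachineID": " M-7 ", "UnitsPerHr": "42.5", "LastSvc": "2024-03-01T08:00:00"}
)
```

Keeping the map as data rather than scattering it through code makes it easier to revise as the design changes.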

The design process serves as your roadmap, a north star you can refer to as your integration takes shape. At the same time, it needs to be a somewhat flexible document. This is because, as the integrations develop, stakeholders may discover new ways of using your data or find alternative applications that could leverage it in novel ways.

Implement

The implementation phase is when you put your design and profiling work into action. As discussed earlier, this could involve Extract, Transform, Load (ETL), Extract, Load, Transform (ELT), data streaming, virtualization, API integration, or other processes.

As you implement your system, you want to pay attention to:

- Whether your data map enables the capabilities it was intended to make possible

- How your data transformation performs. Does it truly position your data to be an effective tool, or do you need to transform it differently?

- The systems that depend on this data. How are they benefitting from the implementation? Also, how does their improved performance align with your higher-level goals?

Verify, Validate, and Monitor

Once you’ve finished your implementation, you need to:

- Verify that the data has been fully integrated into the systems that need it. There may still be silos you need to break down.

- Validate your system’s effectiveness, answering questions such as: Is the data accurate? Is it consistent, or does it vary too much to be useful? Does it have the kind of structure it needs to, or does this have to be refined?

- Monitor your implementation to see how it performs over time. Monitoring may also involve gathering feedback from stakeholders about how the integration makes their jobs easier or impacts productivity across your organization.
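
The first two checks lend themselves to small automated tests. The sketch below assumes every record has an `id` and an `amount` field and uses two invented validation rules. Your own rules would come from the requirements and profiling steps.

```python
def verify_counts(source_rows, target_rows):
    """Verify: list the source ids that never arrived in the target."""
    missing = {r["id"] for r in source_rows} - {r["id"] for r in target_rows}
    return sorted(missing)

def validate(target_rows):
    """Validate: return (id, problem) pairs for rows that break the rules."""
    problems = []
    for r in target_rows:
        if r.get("amount") is None:
            problems.append((r["id"], "amount missing"))
        elif r["amount"] < 0:
            problems.append((r["id"], "amount negative"))
    return problems

# Hypothetical source and target contents after a load.
source_rows = [{"id": 1}, {"id": 2}, {"id": 3}]
target_rows = [{"id": 1, "amount": 5.0}, {"id": 2, "amount": -1.0}]

missing_ids = verify_counts(source_rows, target_rows)
problems = validate(target_rows)
```

Running checks like these on a schedule and tracking the results over time is one simple form of monitoring.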

By verifying, validating, and monitoring, you take an introspective approach to your data integration. This opens the opportunity to continually adjust your system so it’s increasingly useful as time goes on.

These steps also make your system more flexible. For example, your company may choose to create a new product or service that could benefit from a different type of integration. Or there may be a new performance management system that may necessitate an adjustment to your data integration.

A Real-world Example of Data Integration in Action

Data integration is one of the primary building blocks in the surging success of Starbucks [3]. It uses its Starbucks Rewards Program in its mobile app as a data harvesting machine. It then takes the information gleaned through the app and integrates it with systems used to make strategic decisions.

For example, Starbucks takes the data from a single customer’s purchase habits as recorded in its rewards system. The company then integrates this data with its sales system, using it to design personalized promotions for specific customers.

Another component of Starbucks’ digital infrastructure that integrates with its data is its cloud-based AI engine. The company has designed this to use data integrated from the rewards app to recommend specific kinds of coffee, hot chocolate, and other beverages and goodies to individual customers.

Data Integration Strategies for Businesses

There are several ways to approach data integration, and the one you choose will depend on your resources, goals, and strategy.

Manual Data Integration

Manual data integration is when a person collects, loads, and transforms data themselves. That person, or their team, then consolidates the data in a centralized location.

Manual data integration often depends on spreadsheets or scripts the person writes as they merge the data they need to integrate.
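
A script of this kind is often no more than a keyed merge of two exports. The example below is one hypothetical version: the file contents, column names, and the choice to keep sales rows that have no matching contact are all assumptions.

```python
import csv
import io

# Hypothetical exports from two separate systems.
SALES_CSV = "customer_id,total\n1,100\n2,250\n"
CONTACTS_CSV = "customer_id,email\n1,a@example.com\n3,c@example.com\n"

def merge_exports(sales_text, contacts_text):
    """Attach each customer's email to their sales row, matching on id."""
    contacts = {
        row["customer_id"]: row["email"]
        for row in csv.DictReader(io.StringIO(contacts_text))
    }
    merged = []
    for row in csv.DictReader(io.StringIO(sales_text)):
        merged.append({
            "customer_id": row["customer_id"],
            "total": float(row["total"]),
            "email": contacts.get(row["customer_id"]),  # None if no match
        })
    return merged

merged = merge_exports(SALES_CSV, CONTACTS_CSV)
```

Scripts like this work for small, occasional jobs, but each new source means more hand-written matching logic, which is where the other strategies come in.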

Middleware Data Integration

With middleware integration, you use software or a platform that serves as an intermediary during the process. The software facilitates the communication of data and the ways different systems and applications exchange it.

Application-based Integration

Like middleware integration, application-based integration also uses software, but it comes with preset integration tools. The applications have been designed to work well with other software solutions. Reducing the number of manual steps is one of the biggest challenges of business process integration, and application-based integration addresses it by removing much of the manual coding that often slows down an integration initiative.

Uniform Access Integration

Uniform access integration uses a unified interface that continually integrates data from different sources. It presents a unified view of all data that different stakeholders can access and use as they wish.

Common Storage Integration

A common storage integration system consolidates data from different sources, storing it in a repository such as a data warehouse or lake. Like a uniform access integration solution, a common storage system is searchable, making it easier for users to find the information they’re looking for.

4 Key Data Integration Use Cases

While data integration has many use cases, here are four of the most common: data ingestion, replication, warehouse automation, and big data integration.

Data Ingestion

Data ingestion involves shifting data from a number of sources to a specific storage location, such as a data lake or warehouse. The ingestion can happen in real time or using batches. In most cases, the data needs to be cleaned and standardized so it’s ready for use by a data analytics tool.

Data Replication

During data replication, data gets copied and moved between systems; for example, from a database in a data center to a cloud-based data warehouse. Data replication is useful for making sure critical information gets backed up and synchronized with your business processes. Replication can happen in data centers or in the cloud and can be done in bulk, in batches, or in real time.

Data Warehouse Automation

The data warehouse automation process automates the lifecycle of the data warehouse, so analytics-ready data is available sooner. All of the steps involved in the data warehouse lifecycle, such as modeling, mapping, ingestion into data marts, and governance, are automated, which shortens the time before an application can use the information.

Big Data Integration

Managing and moving the large amounts of structured and unstructured data involved in big data applications requires special tools. In a successful big data integration, your analytics tools gain a comprehensive view of your operations. This requires intelligent pipelines that can automate the process of moving, consolidating, and transforming big data from several sources. To accomplish this, a big data integration system needs to be scalable and able to profile data and ensure its quality as it streams in real time.

Data Integration Benefits

Data integration often provides a healthy return on investment thanks to the following benefits.

Accuracy and Trust (Error Reduction)

Data integration provides you with a consistent, reliable system for providing data to systems and decision-makers who need it. By using automated solutions, you can reduce incidents of human error, leaving you with more dependable data.

Data-driven & Collaborative Decision-making

When you integrate data, you give users a comprehensive picture of data from a range of sources. This increases their visibility into the numbers they need to make more effective strategic decisions. Since the data goes to a common repository or system, it’s also easier for individuals to collaborate and team up to derive insights using the same pool of info.

Efficiency and Time-saving

When you automate your data integration, you create efficient ways of collecting, transforming, storing, and using one of your most powerful assets: information. This also frees up employees, analysts, and others to focus on other value-adding responsibilities.

Simplified Business Intelligence

A business intelligence system empowered with data integration has a constant feed of trustworthy information it can use to help encourage growth. When you bring automation into the mix, stakeholders have more cognitive bandwidth to conceive winning strategies instead of muddling through manual data collection and processing tasks. Business intelligence is also where data engineering and data integration combine forces: data engineers use integration to make it easier and more efficient to derive business insights.

Application Integration vs Data Integration

Application integration differs from data integration in that it focuses only on connecting software applications. An application integration solution may simply form a link between two different types of software.

Data integration can do more because it not only forms a data link between software, but it also handles the transfer, transformation, and storage of the information. One typical use case is supporting BI and analytics tools.

Key Takeaways

Data integration gives decision-makers and applications access to clean, reliable, transformed data they can use for business intelligence and to foster growth. Although you can perform data integrations manually, businesses often use automated data integration tools to save time and boost reliability. By integrating data across several applications, you can also empower those systems to automatically perform actions and assist in decision-making.

References

[1] E. Rezig, “Technical Report: An Overview of Data Integration and Preparation,” Vijay.mit.edu, May 2020. https://vijayg.mit.edu/sites/default/files/documents/2020_DataIntegrationReport.pdf

[2] “What is Virtualization? | IBM.” https://www.ibm.com/topics/virtualization

[3] C. Ogombo, “Starbucks blends the perfect coffee using data analytics,” Feb. 24, 2023. https://www.linkedin.com/pulse/starbucks-blends-perfect-coffee-using-data-analytics-collins-ogombo/